Experience Extended | MLOps Planogram

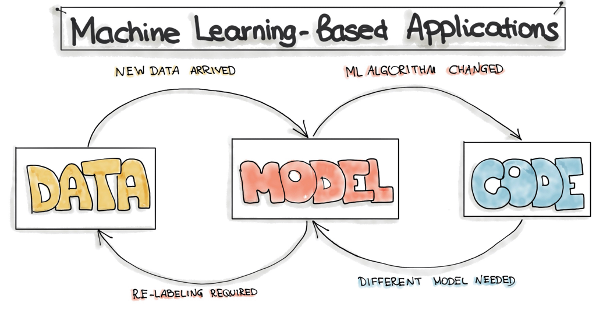

Machine learning and deep learning applications differ from other software applications in one primary way: changes can come from multiple sources. Traditional software changes only through its code, whereas an ML application can change through its code, its model, or even its data. Thus, using purely Agile methods isn’t ideal for ML.

Source: MLOps: Motivation
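
To make the three sources of change concrete, here is a minimal sketch that fingerprints code, data, and model separately and reports which of them changed between two releases. The `ReleaseSnapshot` class, the `changed_sources` helper, and the sample byte strings are hypothetical names introduced for illustration only; a real project would hash actual files or rely on a versioning tool.

```python
import hashlib
from dataclasses import dataclass
from typing import List


def fingerprint(payload: bytes) -> str:
    """Return a SHA-256 hex digest that identifies the payload's content."""
    return hashlib.sha256(payload).hexdigest()


@dataclass(frozen=True)
class ReleaseSnapshot:
    """Hypothetical record of the three things that can change in an ML app."""
    code: str
    data: str
    model: str


def changed_sources(previous: ReleaseSnapshot, current: ReleaseSnapshot) -> List[str]:
    """List which of code, data, and model differ between two snapshots."""
    return [
        name
        for name in ("code", "data", "model")
        if getattr(previous, name) != getattr(current, name)
    ]


# Illustrative payloads (assumptions, not real project artifacts).
before = ReleaseSnapshot(
    code=fingerprint(b"def predict(x): ..."),
    data=fingerprint(b"id,price\n1,10\n"),
    model=fingerprint(b"weights-v1"),
)
after = ReleaseSnapshot(
    code=before.code,                             # code untouched
    data=fingerprint(b"id,price\n1,10\n2,12\n"),  # new rows arrived
    model=fingerprint(b"weights-v2"),             # model retrained
)

print(changed_sources(before, after))  # ['data', 'model']
```

In this example the code is identical in both snapshots, yet the application still changed, which is the situation a code-only release process would miss.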

This is where MLOps comes in. It incorporates ML and data as key players in the ecosystem, rather than just as variants of traditional software components. Let’s look at a planogram of an ML project for details.

Levels of Enablement

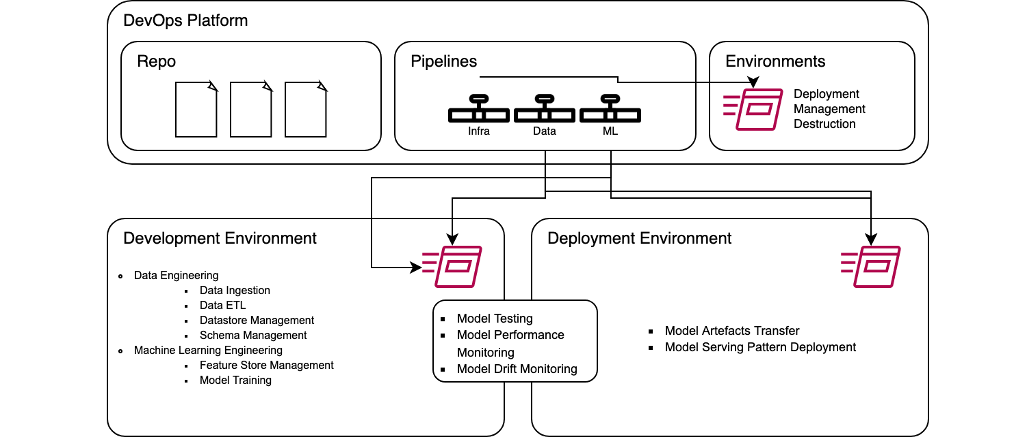

This planogram combines generic and project-specific fragments, with MLOps integrated into each to:

- Unify the development cycle.

- Automate artifact gathering and testing.

- Enable continuous integration, training, etc.

Below is a high-level mapping of each project fragment to its MLOps ingredient.

| Project Fragment | MLOps Ingredient |
| --- | --- |
| Infrastructure & Configuration | Infrastructure as Code (IaC) |
| Data Engineering Operations | Continuous Integration |
| Machine Learning Operations | Continuous Integration, Training, and Testing |
| Deployment & Monitoring | CX |
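
As an illustration of the "Continuous Integration, Training, and Testing" ingredient, the sketch below shows one possible promotion gate that a pipeline could run after each retraining. The function name `should_promote`, the metric keys `accuracy` and `latency_ms`, and the threshold values are all assumptions made for this example; they are not taken from the planogram.

```python
from typing import Dict


def should_promote(
    candidate: Dict[str, float],
    production: Dict[str, float],
    min_accuracy: float = 0.80,      # assumed absolute quality floor
    max_latency_ms: float = 100.0,   # assumed serving latency budget
) -> bool:
    """Return True only if the retrained model is safe to release.

    The candidate must clear the absolute floors and must not be less
    accurate than the model currently in production.
    """
    meets_floor = candidate["accuracy"] >= min_accuracy
    fast_enough = candidate["latency_ms"] <= max_latency_ms
    no_regression = candidate["accuracy"] >= production["accuracy"]
    return meets_floor and fast_enough and no_regression


# Hypothetical metrics produced by an evaluation step.
production_metrics = {"accuracy": 0.89, "latency_ms": 40.0}
candidate_metrics = {"accuracy": 0.91, "latency_ms": 42.0}

if should_promote(candidate_metrics, production_metrics):
    print("Candidate passes the gate and can move to deployment.")
else:
    print("Candidate rejected; production model stays in place.")
```

Keeping the gate as a plain function makes it easy to call from whichever CI system the project already uses.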

On-Ground Operations

It helps to map each theoretical ingredient to the work done on the ground. The list below shows which on-ground operations are performed within each MLOps ingredient:

- MLOps theoretical ingredient
  - Project fragment
    - On-ground operation

- Infrastructure
  - Deployment
  - Management
  - Destruction
- Continuous Integration
  - Data Engineering
    - Data Ingestion
    - Data ETL
    - Datastore Management
    - Schema Management
  - Machine Learning Engineering
    - Feature Store Management
    - Model Training
- Continuous Testing
  - Model Testing
  - Model Performance Monitoring
  - Model Drift Monitoring
- Continuous Deployment
  - Model Artefacts Transfer
  - Model Serving Pattern Deployment
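
One of the operations listed above, model drift monitoring, can be sketched with the Population Stability Index (PSI), which compares the distribution of a feature at training time with its distribution in production. This is only one of several possible drift measures, and the article does not say which one the planogram uses. The function name, the bin count, the smoothing constant, and the 0.2 alert threshold are illustrative assumptions that should be tuned per feature.

```python
import math
from typing import List, Sequence


def population_stability_index(
    expected: Sequence[float],
    actual: Sequence[float],
    n_bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """Compute PSI between a reference sample and a production sample.

    Bins are equal-width over the range of `expected`; values of `actual`
    that fall outside that range are clamped into the first or last bin.
    """
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    if hi == lo:
        raise ValueError("expected sample has no spread to bin over")
    width = (hi - lo) / n_bins

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * n_bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), n_bins - 1)  # clamp out-of-range values
            counts[idx] += 1
        # eps avoids log(0) and division by zero for empty bins
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Synthetic data: the production feature has shifted towards higher values.
training_feature = [i / 100 for i in range(100)]
production_feature = [0.5 + i / 200 for i in range(100)]

psi = population_stability_index(training_feature, production_feature)
DRIFT_THRESHOLD = 0.2  # assumed alert level, not a universal constant
print("drift detected" if psi > DRIFT_THRESHOLD else "no significant drift")
```

A check like this could run on a schedule against recent production inputs and raise an alert or trigger retraining when the threshold is exceeded.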

Physical Lens

A next-generation tech stack is a must for speedy MLOps implementation. For the planogram, we have a live implementation of the following tech stack:

Source: Absolutdata

Conclusion

- To test, deploy, manage, and monitor ML models in actual production, we must build best practices that account for machine learning and AI in software products and services.

- Implementing MLOps helps us prevent technical debt in Machine Learning applications.

- Data, models, and other ML assets must be given the same status as other “traditional” elements in the software development lifecycle.

- Machine Learning models should be included in a unified release process.


Authored by: Sumit Tyagi, Data Scientist at Absolutdata